In part 1 of this article series I showed you two problems that we found in existing code (including our own code, which we thought was perfect). Today we will discuss three other problems that we have seen quite often. And yes, I found these in our own code as well.

Missing Assignment

Another common problem in existing code revealed by X# is expressions that were unintentionally left incomplete, as demonstrated in this cut-down sample:

    #using System.Collections.Generic

    FUNCTION Start() AS VOID
        LOCAL aMyDictionary AS Dictionary<STRING,STRING>
        aMyDictionary := Dictionary<STRING,STRING>{}
        aMyDictionary["myKey"] // incomplete code
        // intended code was:
        // aMyDictionary["myKey"] := "myValue"
        ? aMyDictionary["myKey"]

Vulcan did not report any error or warning about this, which again led to unintended behavior at runtime (a runtime exception). Fortunately, X# does report an error: error XS0201: Only assignment, call, increment, decrement, and new object expressions can be used as a statement. This points us to the missing assignment in the code.
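For comparison, here is a minimal sketch of the same sample with the assignment completed, using the value from the comment in the original code. It assumes a plain console application; with the key present, the final lookup returns the stored value instead of failing at runtime.

```xsharp
USING System.Collections.Generic

FUNCTION Start() AS VOID
    LOCAL aMyDictionary AS Dictionary<STRING,STRING>
    aMyDictionary := Dictionary<STRING,STRING>{}
    // Complete statement: the indexer is now the target of an assignment
    aMyDictionary["myKey"] := "myValue"
    // The key exists, so this lookup no longer throws
    ? aMyDictionary["myKey"]
    RETURN
```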

As we announced a couple of weeks ago, we have recently (to be precise, in Athens, at 20:56 on December 29th, 2016) achieved a major milestone for X#: the compiler can now successfully compile all *valid* existing Vulcan code, or at least all existing code that we have tested the compiler with. And thanks to the many of you who have provided us with literally hundreds of thousands of lines of your code for our testing, we have tested the compiler against A LOT of existing code!

Of course, we have also tested the compiler with our own code, such as the source code for XIDE, which was previously written in Vulcan, and we have even tested it with existing VO code that has not been ported to Vulcan at all. In the process, the stricter and more powerful X# compiler has revealed several problems in existing code (in the VO SDK, in the Vulcan version of the SDK, in our own code and in other developers’ code), some of which should never have been allowed to compile at all.

We have collected a number of such problems, and we want to present them to you so you can learn from them.

Assignment made to the same variable

This is by far the most common issue in existing code revealed by the X# compiler. Here is a simple case:

    FUNCTION Absolute(nValue AS INT) AS INT
        LOCAL nRetValue AS INT
        IF nValue > 0
            nValue := nValue // typo, should be nRetValue := nValue
        ELSE
            nRetValue := - nValue
        END IF
        RETURN nRetValue

VO and Vulcan do not report an error or warning on this, while X# does report a warning: warning XS1717: Assignment made to same variable; did you mean to assign something else?
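A sketch of the corrected function follows, applying the fix named in the comment above. The Start function is only a hypothetical driver added here to exercise both branches; it is not part of the original sample.

```xsharp
FUNCTION Absolute(nValue AS INT) AS INT
    LOCAL nRetValue AS INT
    IF nValue > 0
        // Fixed: assign to the return variable instead of back to the parameter
        nRetValue := nValue
    ELSE
        nRetValue := -nValue
    END IF
    RETURN nRetValue

// Hypothetical driver: both calls print 5
FUNCTION Start() AS VOID
    ? Absolute(5)
    ? Absolute(-5)
    RETURN
```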

Where is my Intellisense?

- Fabrice

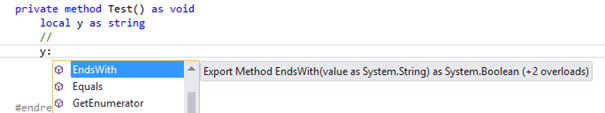

This is a question a lot of you ask when you start the current version of the XSharp Visual Studio integration, and I think you deserve some explanation.

First, Intellisense is maybe not the right term to use, because it covers a lot of features. You have to distinguish between autocompletion, parameter tooltips, Go to entity, Peek definition, code snippets, …

And unfortunately, none of that comes automatically in VS: we have to provide the services.

Prior to the 2015 version, most of that was done by a LanguageService in Visual Studio, but the MSDN documentation now describes that approach as legacy. More importantly, the vNext generation of Visual Studio also introduces a new way of managing the project system, and in order to keep up with the right technology, we must now implement these features as MEF components.

MEF, the Managed Extensibility Framework, is a feature introduced with .NET 4.0 that allows you to import or export classes as providers and have these used automatically by the consumer: here, Visual Studio.

OK, very interesting, but where is my Intellisense?

Not too far away, in fact. In order to provide autocompletion, we must know what you are typing and in which context. So we need to load the references of your project and (using reflection) extract all the information (classes, methods, properties, parameters, …), then walk all your source code and build a database of elements using the XSharp compiler.
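The reflection step can be illustrated with a small sketch. This is not the actual XSharp implementation, only an illustration of the kind of information that reflection exposes; the choice of System.Text.StringBuilder as the inspected type is arbitrary.

```xsharp
USING System.Reflection

FUNCTION Start() AS VOID
    LOCAL oType AS System.Type
    // Arbitrary example type; a real tool would walk the types of every referenced assembly
    oType := TypeOf(System.Text.StringBuilder)
    ? "Class: " + oType:FullName
    // GetMethods() without arguments returns the public methods of the type
    FOREACH oMethod AS MethodInfo IN oType:GetMethods()
        ? "  Method: " + oMethod:Name + " (" + oMethod:GetParameters():Length:ToString() + " parameters)"
    NEXT
    RETURN
```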

By the way, one design question is how the database is built: C++ uses an external SQLite database, while C# uses an in-memory model, so we have to balance speed against memory usage. For now we have also chosen an in-memory model, but we may reconsider that if some users run low on memory with big projects.

Creating and managing that took a while, but we now have a solid model, and the first step will be available soon.

The Needs of the Many...

- Robert van der Hulst

Last week I was on a holiday with my family in Normandy in France.

Some of the tourist highlights that we visited were of course the D-Day beaches Omaha, Utah, Juno, Gold and Sword, where the Allied forces started the liberation of the northern part of Europe on June 6, 1944.

Near those beaches we also visited the war cemeteries where thousands of soldiers from the US, Britain, Canada and other countries are buried. This was very impressive, as you can imagine.

The thought of these (mostly) young men who gave their lives to help liberate Europe makes you feel very grateful and sad at the same time. You know that each of them left behind parents, or a wife and children, who had to miss their beloved sons, husbands and fathers.

At the same time, the number of Allied casualties was of course much smaller than the number of people who were killed by the occupiers.

This reminded me of the famous Vulcan quote from Spock: "The needs of the many outweigh the needs of the few, or the one".

I am sure that these soldiers and their families did not have something like that in mind, but I am glad that the Allied leaders chose to liberate Europe, even though they could have known that this would mean serious losses of Allied lives.

Dilemma: how compatible do you want to be?

- Robert van der Hulst

We are reaching the end of the process to implement the BYOR (Bring Your Own Runtime) support and we are working on some of the more obscure things inside the compiler: implicit and explicit conversions and the related compiler options, such as /vo4 and /vo7.

Numeric comparisons

If you ask any normal person which number is bigger, -1 or an arbitrary positive number, most people will say that -1 is smaller. Now ask a computer the same thing and you may receive different and unexpected answers. Consider the following example program:

    FUNCTION Start AS VOID
        LOCAL dwValue AS DWORD
        LOCAL liValue AS LONG
        liValue := -1
        dwValue := 1
        ? liValue < dwValue
        RETURN

Compile this code in Vulcan and it will compile without warnings, but the result will be that liValue (-1) is NOT smaller than dwValue (1).
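One way to make such a comparison unambiguous, whatever conversion rules the compiler applies by default, is to widen both operands explicitly to a type that can represent every LONG and every DWORD value, such as INT64. The following is only a sketch of that idea; it does not describe what any particular compiler option does.

```xsharp
FUNCTION Start() AS VOID
    LOCAL dwValue AS DWORD
    LOCAL liValue AS LONG
    liValue := -1
    dwValue := 1
    // Both operands are widened to INT64 before comparing,
    // so no signed value has to be reinterpreted as unsigned
    ? (INT64) liValue < (INT64) dwValue
    RETURN
```

With both operands widened, -1 compares as smaller than 1, which matches what a person would expect.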

Dilemmas: when is code correct?

- Robert van der Hulst

In this blog article I would like to share some of the dilemmas that we encountered when creating X#, especially those where we discovered that the VO/Vulcan compiler allowed things that other languages, such as C# and VB, do not allow.

Case 1

    CLASS ParentClass
        VIRTUAL METHOD OnTest() AS STRING
            ? 'parent'
            RETURN 'parent'
    END CLASS

    CLASS ChildClass INHERIT ParentClass
        METHOD OnTest() AS STRING
            ? 'child'
            RETURN 'child'
    END CLASS

    FUNCTION Start() AS VOID
        LOCAL o AS ParentClass
        o := ChildClass{ }
        ? o:OnTest()
        RETURN

When you compile this code without any "special" compiler options, what would you expect?
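Whatever your expectation for the code above, the intent can also be stated explicitly. The sketch below is a variation on the sample, not the original code: it marks the child method with the OVERRIDE modifier, so that a call through a ParentClass reference is dispatched to the ChildClass implementation.

```xsharp
CLASS ParentClass
    VIRTUAL METHOD OnTest() AS STRING
        ? "parent"
        RETURN "parent"
END CLASS

CLASS ChildClass INHERIT ParentClass
    // OVERRIDE states explicitly that this method replaces the virtual parent method
    OVERRIDE METHOD OnTest() AS STRING
        ? "child"
        RETURN "child"
END CLASS

FUNCTION Start() AS VOID
    LOCAL o AS ParentClass
    o := ChildClass{}
    // Virtual dispatch: the ChildClass implementation runs,
    // so "child" is printed by the method and again as its return value
    ? o:OnTest()
    RETURN
```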

Best wishes for 2016

- Robert van der Hulst

From the Devteam meeting in Athens, we wish all our customers and supporters a very successful 2016.

We are looking forward to an exciting year:

- End of January the first public beta of XSharp will be released.

- End of April the official launch of the product will be at the Xbase.Future 2016 conference in Cologne (what used to be the Vulcan.Live conference). Come join us there and see the exciting new features in X#.

The devteam in front of the Parthenon on the Acropolis in Athens

Fabrice Foray

Nikos Kokkalis

Chris Pyrgas

Robert van der Hulst

Runtime Optimizations in X#

- Chris Pyrgas

Some of the many items on our TODO list for Vulcan.NET had to do with optimizing the runtime and adding some missing functionality to it (like passing USUALs by reference to CLIPPER methods). We had already implemented some of them, like improving the performance of the late binding system, but for obvious reasons we were not able to make any further improvements in Vulcan.NET. Now, with the fresh start of XSharp, we have a great opportunity to make things right and fast from the beginning. In this article we will discuss optimizations planned or already implemented for the USUAL, FLOAT and ARRAY data types; more about other types and parts of the runtime will follow in future articles.

The problem with the USUAL type

In Vulcan.NET, the VO-compatible USUAL type is defined as a structure (value type), which makes sense, as it is easier this way to emulate the semantics of the USUAL type, the same way as it behaves in VO. Structures are supposed to be lightweight, compact data types, usually a few bytes long, in order to achieve good performance when copying values from one to another, or passing them as parameters to methods etc. Examples of very common structures used in the system classes are System.Drawing.Point (8 bytes long) and Rectangle (16 bytes long).

The problem with the way the USUAL type is implemented in Vulcan.NET is that it is much longer than that. It is easy to check its size with a single line of code:

? SizeOf(USUAL)
